Then there were the usual thoughtful WUWT responses:

When are the legal people going to be brought into this?

Someone should go to jail if this is tampering as it appears.

we’ve still a bunch of scumbags on this earth pretending that a dynamic history is OK

etc. So what happened?

No, history was not rewritten. What the folks there don't seem to want to acknowledge is that GHCN circulates two files, described here. The file everyone there wants to focus on is the adjusted file (QCA). This, as explained, has been homogenized. This is a preparatory step for its use in compiling a global index. It tries to put all stations on the same basis, and also adjust them, if necessary, to be representative of the region. It is not an attempt to modify the historical record.

That record is contained on the other data file distributed - the unadjusted QCU file. This contains records as they were reported initially. It is generally free of any climatological adjustments. For the last 15 or so years, Met stations have submitted monthly CLIMAT forms. You can inspect these online. Data goes straight from these to the QCU file, and will not change unless the Met organisation submits an amended CLIMAT file. This is the history, and no-one is tampering with it.

The adjusted file does change, as the name suggests it may. Recently, it has been modified to use an improved pairwise comparison homogenization algorithm due to Menne and Williams. It is now (as of Dec 15 2011) used by GISS instead of their own homogenization algorithm, which makes the QCA file much more significant.
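For anyone who wants to check the two files for themselves, here is a minimal sketch of reading one record. It assumes the fixed-width layout I understand the GHCN-M v3 README to describe (11-character station ID, 4-digit year, 4-character element, then twelve 5-character values each followed by three flag characters, with -9999 for missing). The function name and the all-zero station ID in the usage note are made up for illustration; check the README before relying on the column positions.

```python
MISSING = -9999  # assumed missing-value sentinel in the monthly files


def parse_ghcn_v3_line(line):
    """Split one fixed-width record into id, year, element and 12 monthly values.

    Values are stored in hundredths of a degree C; they are returned in
    degrees C, with None where the file holds the missing sentinel.
    Column positions are an assumption based on the v3 README layout.
    """
    station_id = line[0:11]
    year = int(line[11:15])
    element = line[15:19]
    values = []
    for month in range(12):
        start = 19 + 8 * month  # 5-character value plus 3 flag characters
        raw = int(line[start:start + 5])
        values.append(None if raw == MISSING else raw / 100.0)
    return station_id, year, element, values
```

Running the same function over the matching QCU and QCA lines for one station and year gives the two sets of monthly values that the rest of this post compares. A record can be mocked up for testing with something like `"00000000000" + "1939" + "TAVG" + "".join(f"{v:5d}   " for v in values)`.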

Update. I have a new post which looks at the GHCN adjustments more generally, with visualization.

The Iceland Met Office record

So Paul raised this with the Icelandic Met Office, with the following loaded questions:

a) Were the Iceland Met Office aware that these adjustments are being made?

No we were not aware of this.

b) Has the Met Office been advised of the reasons for them?

No, but we are asking for the reasons

c) Does the Met Office accept that their own temperature data is in error, and that the corrections applied by GHCN are both valid and of the correct value? If so, why?

The GHCN “corrections” are grossly in error in the case of Reykjavik but not quite as bad for the other stations. But we will have a better look. We do not accept these “corrections”.

d) Does the Met Office intend to modify their own temperature records in line with GHCN?

No.

And the Met sent their own version of the Reykjavik data. But what is missing from this dialog is that GHCN was never altering their QCU record, and is not suggesting that the Iceland records should be changed. I'll show the QCU data for the period most complained of, post 1939. Units are .01°C. Unfortunately, the Dec numbers got lost in formatting.

| Year | Jan | Feb | Mar | Apr | May | Jun | Jul | Aug | Sep | Oct | Nov |
|------|-----|-----|-----|-----|-----|-----|-----|-----|-----|-----|-----|
| 1937 | 80 | -160 | -130 | 500 | 690 | 960 | 1160 | 1040 | 860 | 400 | 360 |
| 1938 | 10 | 180 | 200 | 470 | 600 | 920 | 1130 | 1060 | 940 | 490 | 170 |
| 1939 | -150 | 130 | 360 | 500 | 870 | 1080 | 1300 | 1230 | 1180 | 740 | 150 |
| 1940 | 160 | 170 | -20 | 300 | 760 | 970 | 1120 | 1010 | 710 | 630 | 130 |
| 1941 | -30 | -150 | 210 | 540 | 880 | 1150 | 1230 | 1150 | 1150 | 710 | 480 |
| 1942 | 160 | 190 | 270 | 360 | 770 | 970 | 1140 | 1130 | 790 | 260 | 410 |
| 1943 | 40 | -90 | 150 | 290 | 500 | 990 | 1140 | 990 | 810 | 450 | 200 |
| 1944 | -170 | 60 | 140 | 410 | 660 | 990 | 1300 | 1180 | 800 | 460 | 10 |
| 1945 | -260 | 10 | 410 | 440 | 760 | 980 | 1190 | 1200 | 960 | 720 | 650 |
| 1946 | 240 | -20 | 280 | 320 | 840 | 870 | 1060 | 1060 | 800 | 760 | 140 |
| 1947 | 320 | -200 | -280 | 170 | 830 | 990 | 1090 | 1100 | 770 | 560 | 30 |
| 1948 | 70 | 200 | 320 | 120 | 460 | 940 | 1060 | 1100 | 620 | 310 | 250 |
| 1949 | -270 | 0 | 10 | 0 | 360 | 980 | 1050 | 1010 | 900 | 450 | 250 |
| 1950 | 280 | -70 | 130 | 220 | 710 | 930 | 1240 | 1220 | 720 | 450 | 70 |
| 1951 | -150 | -90 | -300 | 0 | 690 | 980 | 1060 | 1140 | 970 | 570 | 90 |
| 1952 | -290 | -30 | 100 | 340 | 620 | 830 | 1010 | 1010 | 760 | 570 | 260 |
| 1953 | 60 | 180 | 270 | 10 | 680 | 1020 | 1190 | 1150 | 970 | 420 | 200 |
| 1954 | 200 | 10 | 160 | 450 | 760 | 1020 | 1060 | 1100 | 610 | 370 | 270 |
| 1955 | -200 | -270 | 140 | 560 | 570 | 990 | 1050 | 1010 | 810 | 390 | 430 |
| 1956 | -320 | 270 | 360 | 360 | 660 | 840 | 1070 | 990 | 930 | 470 | 500 |
| 1957 | 110 | -150 | 40 | 500 | 700 | 990 | 1200 | 1130 | 710 | 470 | 300 |

These numbers are almost identical to the version the Iceland Met Office sent. Actually there are isolated differences. These seem to be sign differences, where GHCN has a negative sign. The sign may have disappeared in scanning the Iceland numbers.
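The check for sign-only differences is easy to automate. This is just a sketch, not the code I used: the function name is made up, and both inputs are assumed to be equal-length lists of monthly values in the same units (.01°C here).

```python
def classify_differences(ghcn, other):
    """Compare two monthly series position by position.

    Returns two lists of indices: positions where the values differ only
    in sign, and positions where they differ in some other way.
    """
    sign_only, other_diffs = [], []
    for i, (g, o) in enumerate(zip(ghcn, other)):
        if g == o:
            continue  # identical, nothing to report
        if g == -o:
            sign_only.append(i)  # same magnitude, opposite sign
        else:
            other_diffs.append(i)
    return sign_only, other_diffs
```

If the second list comes back empty for every year, the two records differ only by dropped minus signs.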

The story of the Reykjavik adjustments was taken up by Ole Humlum, with again the same disdain for the record contained in the GHCN QCU file and the purpose of the homogenization adjustment. He refers to the Iceland data, and to Rimfrost, but does not mention the almost identical QCU.

The V3.1 adjustments

It is true that the adjustments have changed recently. V3.1 was released 4 November 2011. I have a QCA file from 14 July 2011, and this is, for Reykjavik, almost identical to the QCU file. I have a v2.mean file from Dec 2009, which is the V2 unadjusted file, and it is essentially identical to the current QCU file. In v2 style, it had duplicates. There were four for Reykjavik, but they had little overlap and, where they did overlap, were consistent. The unadjusted file has not changed, but the QCA file has.

I also have an adjusted v2 file from Dec 2009. The adjustments are substantial, but less than the current ones.

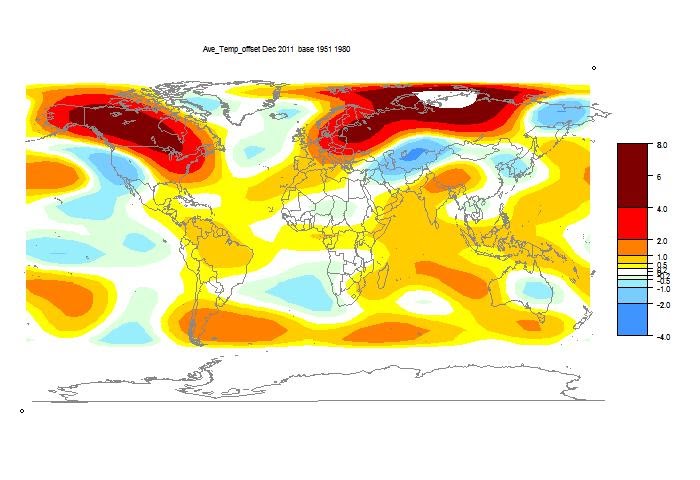

So here is the plot of the current QCU file vs the adjusted QCA file:

And here is the plot of differences

As you see, the adjustments are substantial. I have done a repeat of the GHCN analysis that we did for Darwin, with a histogram of the effect on trends. I will blog about this shortly. Current estimate is that this adjustment is in the top 10%.
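For a single station, the "effect on trends" is just the difference between the least-squares trend of the adjusted series and that of the unadjusted one. Here is a rough sketch of that calculation, assuming annual means in °C against calendar years. The function names are mine, and this is not the code behind the histogram.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def trend_effect(years, unadjusted, adjusted):
    """Adjusted-minus-unadjusted trend, in degrees C per decade."""
    return 10 * (ols_slope(years, adjusted) - ols_slope(years, unadjusted))
```

Doing this for every station in the inventory and sorting the results is what lets you say where one station's adjustment sits relative to the rest.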