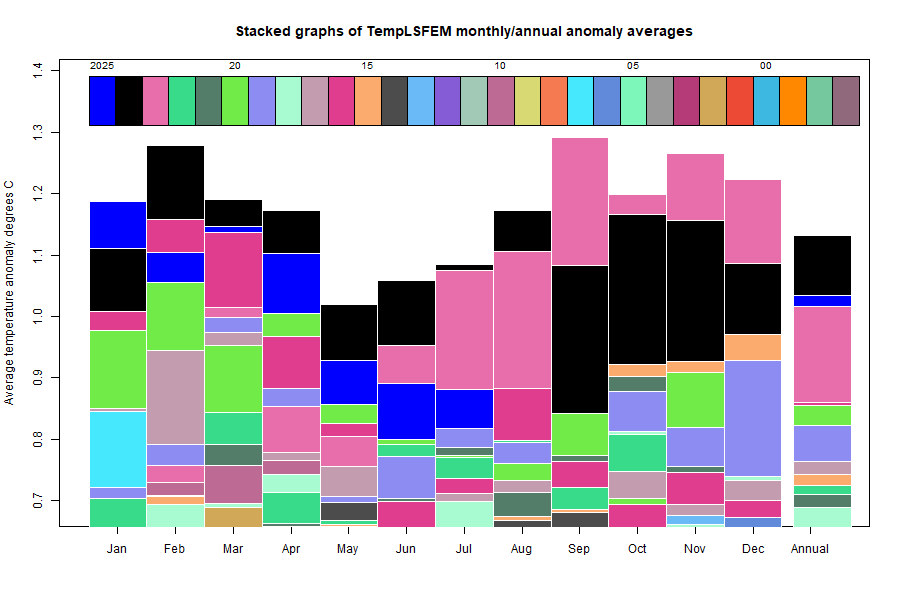

The TempLS FEM anomaly (1961-90 base) was 0.886°C in January, up from 0.845°C in December.

There was again a strange sequence in the GHCN V4 monthly reports. Until last August, by about the 6th of the month I would have about 3200 land stations reporting from outside the US (i.e. ROW), which was enough to publish. After August, data was much sketchier, with countries like China missing, and the count struggled to reach about 2600 stations. By about the 10th of the month, I could get a reasonable result by merging in JMA (Japan) data.

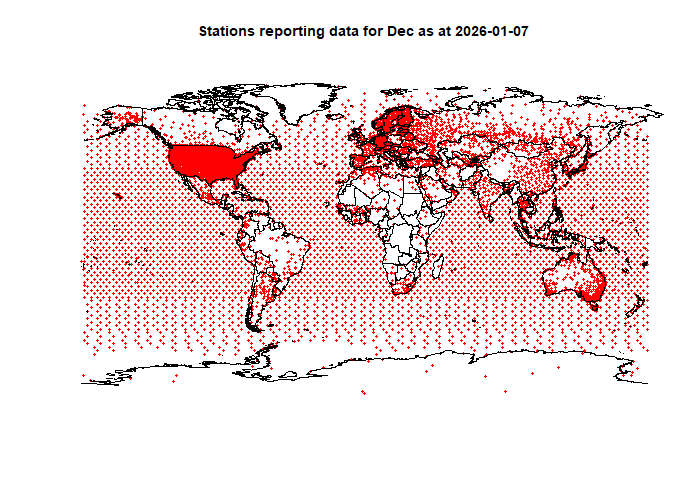

In January, there was suddenly an early flush of ROW data, even more than before August. But this month, by the 7th, there were only 1164 ROW stations. Then the situation improved, and now there are 3194, almost back to the old days. So I have confidence in publishing.

However, I looked into using GHCN Daily data, which is where GHCN-M comes from. When GHCN-M had only 1164 stations, I found that the number of monthly averages I could get from GHCN-D depended strongly on how many days of data I required a month to have. Requiring 25 days did not do much better than 1100 stations, but if I relaxed to 20 days, I could get up to 3000. I presume that the last days of the month are coming in slowly.
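As a rough illustration of how that day-count threshold works, here is a minimal Python sketch. It is not the TempLS code: the function name `monthly_means`, the `(station_id, day, temp_c)` record layout and the toy numbers are all assumptions made up for the example, and it averages raw daily values rather than doing anything GHCN-specific.

```python
from collections import defaultdict


def monthly_means(daily_records, min_days):
    """Average daily values per station for a single month.

    daily_records: iterable of (station_id, day, temp_c) tuples (hypothetical layout).
    min_days: minimum number of distinct days a station needs to be kept.
    Returns {station_id: mean_temp_c} for the stations that qualify.
    """
    by_station = defaultdict(dict)
    for station_id, day, temp_c in daily_records:
        if temp_c is None:  # skip missing values
            continue
        by_station[station_id][day] = temp_c  # a repeated day overwrites the earlier value
    return {
        sid: sum(days.values()) / len(days)
        for sid, days in by_station.items()
        if len(days) >= min_days
    }


# Toy month: station A is complete, B is missing the last days, C has barely started.
records = [("A", d, 10.0) for d in range(1, 32)]   # 31 days
records += [("B", d, 20.0) for d in range(1, 23)]  # 22 days
records += [("C", d, 5.0) for d in range(1, 11)]   # 10 days

strict = monthly_means(records, min_days=25)
relaxed = monthly_means(records, min_days=20)
print(len(strict), len(relaxed))  # prints: 1 2
```

In this toy case, relaxing the requirement from 25 to 20 days picks up station B, whose late-month days have not arrived yet. The cost is that its average is based on only part of the month.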

So I may switch to daily to get a better idea of what is happening, and maybe post earlier. However, for now I am still using GHCN-M (+JMA).

Here is the temperature map, using the FEM-based map of anomalies. Use the arrows to see different 2D projections.

As always, the 3D globe map gives better detail. There are more graphs and a station map in the ongoing report, which is updated daily.