I've been experimenting with Steven Mosher's R package

RghcnV3. It has many useful features, and I think the R package format serves it well. I'm not as enthusiastic as Steven about the zoo and raster structures, at least for this purpose, but still, I may yet be convinced.

Anyway, it certainly gets some of the messy initial data structuring out of the way. So since I am currently working on Ver 2.2, I thought I would put together a very simple version using some of the more recent ideas, in the RghcnV3 style.

Update - I've added a version of the code with detailed comments at the end.

TempLS basics

Firstly, a reminder of how TempLS works. After data rearrangement, we have an array of temperature readings, over some number S of stations, and over M months (usually 12, but can do seasons etc) and Y years. This is a SxMxY array with many missing values.

I'll explain the math in a similar style to

this account of V2. I'll use a notation, described there, in which arrays (matrices etc) are represented with suffixes, and equations including them are true for the natural range of values. To save summation signs, I use a

summation convention in which a repeated index is assumed to be summed over the range. But to emphasise that, I use colors. Summed indices are blue, independent ones are red. There are some I need to exempt from the summation rule, and these are green.

So we assume that the observed temps x can be modelled with

- a local temperature L, which varies over station and months, but not over years. Think of it as a monthly average, and

- a global temperature G uniform over months and stations, but varying with years. This corresponds to the global anomaly that we are after.

So we write a model equation

x

smy ~

L

sm +

G

y

I'll repeat here the least squares part from that earlier post. It's useful to maintain a convention that every term has to include every red index. So I'll introduce an identity I

y, for which every component is 1, and rewrite the model as

x

smy ~

I

yL

sm +

I

smG

y

Weighted least squares.

The L and G are parameters to be fitted. We minimise a weighted least squares expression for the residuals. The weight function w has two important roles.

- It is zero for missing values

- It needs to ensure that stations make "fair" contributions to the sum, so that regions with many stations aren't over-represented. This means that the sum with weights should be like a numericam integral over the surface. w should be inversely proportional to density.

So we want to minimise

w

(smy)(x

smy - I

yL

sm - I

smG

y)

2

Differentiation

This is done by differentiating this expression wrt each parameter component. In the summation convention, when you differentiate a paired index, it disappears. The remaining index goes from blue to red. The original scalar SS becomes a system of equations, as the red indices indicate:

| Minimise: | w(smy)(xsmy - IyLsm - IsmGy)2

|

| Differentiate wrt L: | w(smy)Iy(xsmy - IyLsm - IsmGy)

|

| Differentiate wrt G: | w(smy)Ism(xsmy - IyLsm - IsmGy)

|

Notice what we have here - linear equations in L and G, and all we need is the data x and the weights w.

Equations to be solved

These are the equations to be solved for L and G. It can be seen as a block system:

A*L + B*G = X1

B

T*L + C*G = X2

combining s and m as one index, and where

T signifies transpose. Examining the various terms, one sees that A and C are diagonal matrices, so the overall system is symmetric.

The first equation can be used to eliminate L, so

(C - B

TA

-1B)*G = X2 -

B

TA

-1X1

This is not a large system - G has of order 100 components (years).

Implementation with RghcnV3

There are just three steps. We have to get the array x. RghcnV3 can get us (as explained in the

manual as far as the output of readV3Data(). This is a large array ordered first by months (in rows), then by year, then by station. There is then a function v3ToZoo() in V1.3) which should get it into a structured array. Unfortunately, I ran out of memory at that stage. But the further rearrangement was a basic step in TempLS, so I used the sortdata() routine to do that. The arguments are the data array, and a vector with the start and end year of the period you want to look at.The resulting x array is ordered as suggested above, by station, month and year.

Weighting

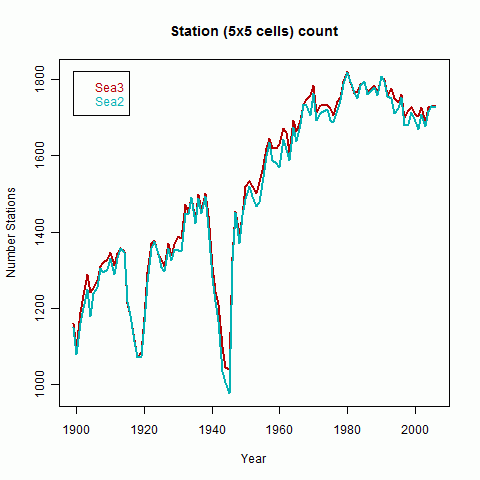

Then we need a weight function w. A basic method is to divide the surface into 5x5 lat/lon cells, and get a station density by dividing the area of each cell by the number its stations reporting in each month. This is done in the routine makeweights, which returns the array w.

Solving

So that's it. We have the needed w and x; the rest is just some summing over rows etc to get the block matrices, and then a block inversion. This is done in the routine solveTemperature, which returns the temperature anomalies about a mean zero. I've added a plot statement.

The code

As promised, just three routines. Start with dat, the array from readV3Data(), and your choice of years yrs[1:2]. There is another version with detailed comments below:

sortdata = function(v,yrs=c(1900,2010)) # Sorts data from v2.mean etc into matrix array x

{

nd=dim(v)[1];

y0=yrs[1]-1;

iq=c(0,which(diff(v[,1])>0),nd);

jq=length(iq)-1;

x=array(NA,c(jq,12,yrs[2]-y0));

for(i in 1:jq){ # loop over stations

kq=c(iq[i]+1,iq[i+1]);

iy=as.matrix(v[kq[1]:kq[2],]);

oy=(iy[,2] > y0) & (iy[,2]<=yrs[2]);

if(sum(oy)<2)next;

iz= iy[oy,];

x[i,,iz[,2]-y0] = t(iz[,3:14]);

}

x;

}

Then the routine to make the weights - needs x and lat/lon:

makeweights = function( x, inv)

{

d = dim(x); wt = array(NA,d);

ns = floor(inv$Lat/5)*72 + floor(inv$Lon/5) + 1333;

wtt = sin((1:36-0.5)*pi/36);

w_area = rep(wtt,each=72);

for(i in 1:d[2]){

for(j in 1:d[3]){

ow = !is.na(x[,i,j]);

n=ns[ow];

kc=tabulate(c(n,72*36));

ok=kc>0; wc=w_area;

wc[ok]=w_area[ok]/kc[ok];

wt[ow,i,j]= wc[n];

}

}

wt;

}

Finally, the solve routine:

solvetemperature=function(wt,x)

{

wt[is.na(wt)]=0;x[is.na(x)]=0;

x=x*wt; d=dim(x); d=c(d[1]*d[2],d[3]);

dim(x)=dim(wt)=d;

A=rowSums(wt)+1.0e-9;

R1=rowSums(x);

C=colSums(wt);

R2=colSums(x);

aw=array(rep(1/A,d[2])*wt,d);

b = drop(R2-R1 %*% aw);

S=diag(C) - t(wt) %*% aw;

S[1,1]=S[1,1]+1; # there is a dof to be fixed

y=solve(S,b);

y-mean(y);

}

And the program to run it all:

x=sortdata(dat,yrs);

wt=makeweights(x,inv);

T=solvetemperature(wt,x);

png("TempLSv0.png",width=500);

plot(yrs[1]:yrs[2],T,type="l");

dev.off()

And a (no-frills) result:

Here is a version of the code above with line by line comments.

#You'll want your own version of yrs

sortdata = function(v,yrs=c(1900,2010))

# Sorts data from v2.mean etc into matrix array x.

#v is just the v3.mean file read in - one year per row, one station block after another. Within a block, the years are in order.

{

nd=dim(v)[1];

# Number of stations in inventory

y0=yrs[1]-1;

# Year zero

iq=c(0,which(diff(v[,1])>0),nd);

# Endpoints of each station block of data (where the stat num ber increments)

jq=length(iq)-1;

# Number of stations in data set

x=array(NA,c(jq,12,yrs[2]-y0));

# setting up the big temp matrix

for(i in 1:jq){

# loop over stations

kq=c(iq[i]+1,iq[i+1]);

# marks the block of station i

iy=as.matrix(v[kq[1]:kq[2],]);

# copies that block of info

oy=(iy[,2] > y0) & (iy[,2]<=yrs[2]);

# selects the years within range

if(sum(oy)<2)next;

# reject stations with less than a year of data (can't get an anomaly with 1 dof)

iz= iy[oy,];

# the selected block

x[i,,iz[,2]-y0] = t(iz[,3:14]);

# copy into x

}

x;

# return x

}

#;

makeweights = function( x, inv)

# To return wt, a matrix with the same structure as x

#ie a weight for every station/month/year, but zero or NA if missing

{

d = dim(x); wt = array(NA,d);

# set up the big blank

ns = floor(inv$Lat/5)*72 + floor(inv$Lon/5) + 1333;

# ns lists a (5x5 degree) cell number for every station in the inventory

wtt = sin((1:36-0.5)*pi/36); w_area = rep(wtt,each=72);

# w_area is an area weight for each cell

for(i in 1:d[2]){

# loop over months

for(j in 1:d[3]){

# loop over years

ow = !is.na(x[,i,j]);

# which stations reported in i/j

n=ns[ow];

# and what were their cell numbers

kc=tabulate(c(n,72*36));

# frequency of cell numbers

ok=kc>0;

# logical for empty cells (that month)

wc=w_area;

#Initialize the weights to the areas

wc[ok]=w_area[ok]/kc[ok];

# now divide the weights by number of stats, to get inverse density

wt[ow,i,j]= wc[n];

# set the weights for that i,j

}

}

wt;

# return the big weight matrix

}

#Now we have the weights wt and temp matrix x, can solve linear systen for temp anomaly

solvetemperature=function(wt,x)

{

# to avoid NA fuss, missing readings have zero weight, so temp doesn't matter

wt[is.na(wt)]=0;x[is.na(x)]=0;

# redimension to a matrix with s&m vs year

x=x*wt; d=dim(x); d=c(d[1]*d[2],d[3]);

dim(x)=dim(wt)=d;

A=rowSums(wt)+1.0e-9;

# Top left diag block matrix

# There can be missing lines with all zero. Instead of eliminating them, just add something to the diag to avoid a 0/0 error. No effect on result.

R1=rowSums(x);

# Corresponding RHS

C=colSums(wt);

#Bottom right diag matrix

R2=colSums(x);

# Corresponding RHS

aw=array(rep(1/A,d[2])*wt,d);

# LHS term after dividing by A to eliminate L

b = drop(R2-R1 %*% aw);

# Elimination op for RHS

S=diag(C) - t(wt) %*% aw;

# Local temp has now been eliminated, along with variables s and m.

#Now the equation is just in yearly T anomalies over years. Symmetric.

# S is singular, because an arbitrary constant could be added to G and subtracted from L.

# So arbitrarily set anomaly 1 to zero

S[1,1]=S[1,1]+1;

# Now solve - S could be up to 150x150 say (yr[2]-yr[1])

y=solve(S,b);

# Now reset to mean zero (fixing that arbitrariness)

y-mean(y);

# zero mean anomaly returned.

}

# End functions.

# Execution You start with v from data file (readV3Data()) and inv from inventory (readInventory())

# All you need from inventory is lat/lon

x=sortdata(dat,yrs);

# Many other makeweights() are possible

wt=makeweights(x,inv);

T=solvetemperature(wt,x);

# A simple plot on a png file; comment out to see on screen (also dev.off())

png("TempLSv0.r");

plot(yrs[1]:yrs[2],T,type="l",xlab="Year");

dev.off();