Unusually, the Arctic was mostly cool. It was cold in northern Russia, and the cold extended through Europe to Spain. South of that cold was a warm band from China to the Sahara, which was probably responsible for the net warmth. The US was patchy, but more cool than warm. Interactive map here.

On prospects, the BoM says that ENSO is neutral, with neutral prospects. Currently the SOI looks Niña-ish, but the BoM says that is due to cyclones and will pass.

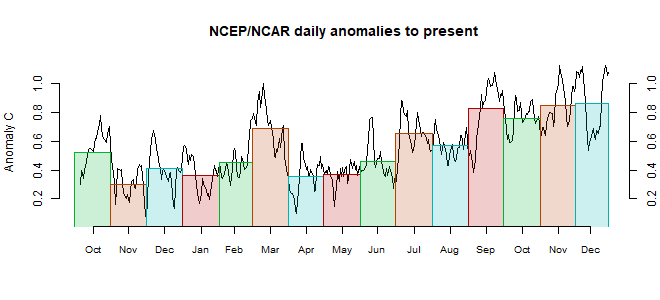

This post is part of a series that has now run for some years. The NCEP/NCAR integrated average is posted daily here, along with monthly averages, including current month, and graph. When the last day of the month has data (usually about the 3rd) I write this post.
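
At its simplest, an "integrated average" of gridded data is an area-weighted mean. The sketch below assumes a regular latitude-longitude grid with values at cell centres, where each cell's area is proportional to cos(latitude); the function name and toy grid are hypothetical, and the actual NCEP/NCAR processing behind the daily index may differ in detail.

```python
import math


def area_weighted_mean(grid, lats):
    """Area-weighted mean of a regular latitude-longitude grid.

    grid[i][j] is the value at latitude lats[i] (degrees) and longitude
    index j. On an equal-angle grid each cell's area is proportional to
    cos(latitude), so that is used as the weight.
    """
    total = 0.0
    weight_sum = 0.0
    for lat, row in zip(lats, grid):
        w = math.cos(math.radians(lat))
        for value in row:
            total += w * value
            weight_sum += w
    return total / weight_sum


# Toy 3 x 2 grid: warm anomalies at 60S and 60N, zero on the equator.
lats = [-60.0, 0.0, 60.0]
grid = [[1.0, 1.0], [0.0, 0.0], [1.0, 1.0]]
print(area_weighted_mean(grid, lats))
```

The weighted result is about 0.5, whereas a plain average of the six cells would give about 0.67: the smaller high-latitude cells count for less.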

The TempLS mesh data is reported here, and the recent history of monthly readings is here. Unadjusted GHCN is normally used, but if you click the TempLS button there, it will show results using adjusted data, and also with different integration methods. There is an interactive graph using a 1981-2010 base period here, which you can use to show different periods or to compare with other indices. There is a general guide to TempLS here.
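
Comparing indices only makes sense on a common base period. A minimal sketch of re-expressing monthly anomalies on a 1981-2010 base, assuming the data come as a mapping from (year, month) to anomaly; the function name is hypothetical and this is not necessarily how the interactive graph does it.

```python
from collections import defaultdict


def rebase_monthly(anoms, base_start=1981, base_end=2010):
    """Re-express monthly anomalies relative to a new base period.

    anoms maps (year, month) -> anomaly on whatever base the source used.
    For each calendar month, the mean over base_start..base_end is subtracted,
    so every calendar month averages zero over the new base. Months with no
    data in the base period are dropped, since no offset can be computed.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for (year, month), value in anoms.items():
        if base_start <= year <= base_end:
            sums[month] += value
            counts[month] += 1
    offsets = {m: sums[m] / counts[m] for m in counts}
    return {(y, m): v - offsets[m]
            for (y, m), v in anoms.items() if m in offsets}
```

Subtracting a separate offset per calendar month, rather than one offset for the whole series, keeps any seasonal difference between the old and new bases from leaking into the anomalies.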

The reporting cycle starts with a report of the daily reanalysis index on about the 4th of the month. The next post is this one, the TempLS report, usually about the 8th. Then when the GISS result comes out, usually about the 15th, I discuss it and compare it with TempLS. The TempLS graph uses a spherical harmonics fit to the TempLS mesh residuals; the residuals are displayed more directly using a triangular grid in a better-resolved WebGL plot here.

A list of earlier monthly reports of each series in date order is here:

Nick,

Great informative site for those of us who love meteorology, climatology, math, other STEM fields and communicating science, and who knew giants such as Helmut Landsberg and Murray Mitchell. But why are you now reporting anomalies to the nearest 1/1000th? The global anomaly is now 0.046°, not 0.045637° rounded to 0.046°? Why create a value that is not measurable? A mathematical trick? If global temperature can indeed now be measured to ±0.1° (depending on measurement/instrument), what point is served by a mathematical trick to announce "Anomaly is up by 0.001°"? Yes, the decadal global changes, trends and changes in Earth's environment and weather/climate patterns, and understanding their drivers, are huge advances in science and indeed the big critical stories. Not a 0.001° "anomaly up" at the end of the month. Isn't that headline just fodder for non-believers? Next month, "Anomaly up by 0.0001°"? That is not the story. Yikes!!

Anon,

Thanks. On rounding, I have never been impressed by arguments that calculated numbers should be rounded to the uncertainty. One thing is for sure: rounding loses information, even if you think it wasn't worth much. And there are various levels of uncertainty. As I propound from time to time, uncertainty is properly quantified as the range of values a number might take if it had been derived differently. And there are various things a reader might include in what might be allowed to differ.

For example, the commonly quoted uncertainty of a trend is the variability you might experience had the weather been different. For global temperature, a big component is spatial: what if you had measured in different places? That is masked a bit with reanalysis, where the numbers are presented on a grid. One thing I am often checking with TempLS is the variability you might find as stations report. The third decimal place is significant here. I also find the third place useful in checking the numerics of integration (eg here).
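
The spatial "what if you had measured in different places" question can be sketched by recomputing a weighted average from random subsets of stations and looking at the spread. The inputs (station anomalies and area weights) and the function name are hypothetical, and this is a generic subsampling idea, not necessarily how TempLS checks its variability.

```python
import random
import statistics


def subsample_spread(values, weights, fraction=0.5, trials=1000, seed=0):
    """Mean and standard deviation of a weighted average over random station subsets.

    values[i] is station i's anomaly and weights[i] its (hypothetical) area
    weight. Each trial keeps a random `fraction` of stations and recomputes
    the weighted mean; the spread across trials indicates how much the
    result depends on which places happened to be measured. trials must be >= 2.
    """
    rng = random.Random(seed)
    n = len(values)
    k = max(1, int(n * fraction))
    means = []
    for _ in range(trials):
        idx = rng.sample(range(n), k)
        total_w = sum(weights[i] for i in idx)
        means.append(sum(weights[i] * values[i] for i in idx) / total_w)
    return statistics.mean(means), statistics.stdev(means)


# Hypothetical example: 20 equally weighted stations alternating 0.0 and 1.0.
vals = [0.0, 1.0] * 10
wts = [1.0] * 20
centre, spread = subsample_spread(vals, wts)
print(f"subset mean {centre:.3f}, spread {spread:.3f}")
```

With `fraction=1.0` every trial uses all stations and the spread collapses to zero, which is a handy sanity check.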

So in short, I think three figures can convey useful information, even if with the uncertainties you have in mind, fewer would do. So I give them, and let the reader sort it out.

Getting precise month-to-month measurements of ENSO indices is incredibly important. As it turns out, increased precision in the ENSO value, and also in temporal resolution, allows us to improve the lunar forcing models.

https://imageshack.com/a/img922/5696/npwwAp.png

It is time for all measurements to be reported on a finer scale than monthly, as the trend in findings is that more of the climate behaviors are found not to be random. See https://eos.org/editors-vox/coupled-from-the-start

Take a look at this figure, which is an extreme overfit to an ENSO interval from 1910-1920, highlighted in yellow.

https://imageshack.com/a/img922/7177/NJaB2K.png

This is overfit to an R=0.977 even though it is obvious that ENSO indices such as SOI and NINO34 contain significant noise.

Yet despite the overfit within this short interval (consisting of essentially 4 known orthogonal gravitational cycles), the extrapolated match over the remaining range is obviously statistically significant. The correlation does not appear to gradually reduce away from the training interval, indicating that the underlying model is deterministic and not stochastic.

One way to determine the robustness of the fitting technique is to add varying amounts of random noise to the data set and see how well the fit holds up.
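
A minimal sketch of such a noise test, assuming the model is a sum of sinusoids at fixed, known periods, so only amplitudes and phases are fitted by linear least squares. The periods, the synthetic signal and all function names are hypothetical placeholders, not the actual lunar cycles, ENSO data or the commenter's model. Each noise level is fitted on a training window only, and the score is the out-of-sample correlation with the noise-free signal.

```python
import math
import random


def design_row(t, periods):
    """Regressors at time t: a constant plus a sine/cosine pair per fixed period."""
    row = [1.0]
    for p in periods:
        phase = 2.0 * math.pi * t / p
        row += [math.sin(phase), math.cos(phase)]
    return row


def solve(a, b):
    """Solve the small dense system a x = b (Gaussian elimination, partial pivoting)."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        acc = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - acc) / m[r][r]
    return x


def fit(ts, ys, periods):
    """Least-squares coefficients from the normal equations (X^T X) beta = X^T y."""
    rows = [design_row(t, periods) for t in ts]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(k)]
    return solve(xtx, xty)


def corr(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def noise_robustness(ts, signal, train, periods, sigmas, seed=1):
    """For each noise level sigma: add Gaussian noise, fit on the training
    indices only, and return the out-of-sample correlation between the
    fitted curve and the noise-free signal."""
    rng = random.Random(seed)
    train_set = set(train)
    test = [i for i in range(len(ts)) if i not in train_set]
    results = {}
    for sigma in sigmas:
        noisy = [y + rng.gauss(0.0, sigma) for y in signal]
        beta = fit([ts[i] for i in train], [noisy[i] for i in train], periods)
        pred = [sum(b * x for b, x in zip(beta, design_row(ts[i], periods)))
                for i in test]
        results[sigma] = corr(pred, [signal[i] for i in test])
    return results


periods = [12.0, 27.0, 43.0]   # hypothetical periods, in months
ts = list(range(360))          # 30 years of monthly steps
signal = [math.sin(2 * math.pi * t / 12) + 0.5 * math.cos(2 * math.pi * t / 27)
          + 0.8 * math.sin(2 * math.pi * t / 43) for t in ts]
train = list(range(120))       # fit on the first 10 years only
scores = noise_robustness(ts, signal, train, periods, [0.0, 0.5, 2.0])
print(scores)
```

If the out-of-sample correlation degrades only slowly as sigma grows, the fitting technique is robust to that level of noise; the normal equations are fine for a handful of well-separated periods, though a QR-based solver would be safer for many closely spaced ones.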

So in this case, high resolution, high precision data with low measurement noise is just as important for climate modeling as it is for refining orbital ephemeris parameters.

Nick Stokes: "On rounding, I have never been impressed by arguments that calculated numbers should be rounded to the uncertainty."

I agree. I think it's not a good argument.

Round at the end, if you wish. Don't use the number of displayed digits to represent your estimate of uncertainties; it's not even typical that the actual uncertainty in the number is ±0.5 of the smallest digit in any case.
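
A small illustration of why rounding at the end matters, using hypothetical numbers: six monthly anomalies of 0.14° each. Rounding each value to one decimal before averaging gives 0.1, while averaging at full precision and rounding only the result gives 0.14.

```python
def mean(xs):
    """Arithmetic mean of a sequence of numbers."""
    return sum(xs) / len(xs)


# Hypothetical monthly anomalies (°C), each slightly above 0.1.
monthly = [0.14] * 6

# Rounding each value to 0.1 before averaging throws away the 0.04 they share.
rounded_first = round(mean([round(x, 1) for x in monthly]), 2)

# Keeping full precision and rounding only the final result preserves it.
rounded_at_end = round(mean(monthly), 2)

print(rounded_first, rounded_at_end)
```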

I like to display the uncertainty to two significant digits, e.g. 1.53 ±0.25, not 1.5 ±0.3. The p value you'd infer from 1.53 ± 0.25 is very different from the one for 1.5 ± 0.3, so you really are throwing away information.
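
A quick check of the p-value point, assuming the ± figures are one standard error, the estimate is normally distributed, and the test is against a true value of zero (the comment doesn't say which hypothesis it has in mind). Under those assumptions the two-sided p-values come out at roughly 6e-7 for 1.5 ± 0.3 and about 1e-9 for 1.53 ± 0.25, a factor of several hundred apart.

```python
import math


def two_sided_p(estimate, std_error):
    """Two-sided p-value for the null hypothesis 'true value = 0'.

    Assumes the ± figure is one standard error and the estimate is normally
    distributed, so p = 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    """
    z = abs(estimate) / std_error
    return math.erfc(z / math.sqrt(2.0))


p_two_digit = two_sided_p(1.53, 0.25)  # z = 6.12
p_rounded = two_sided_p(1.5, 0.3)      # z = 5.0
print(f"{p_two_digit:.2e} vs {p_rounded:.2e}")
```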

There has been some extreme warmth in the Antarctic over recent weeks. It looks likely to be the warmest Antarctic April in the NCEP record. Sea ice is responding in kind: having moved from lows back towards the median in March, it's now heading back out towards record lows at quite a pace.

Globally, April is warm so far, based on NCEP/NCAR. It could be the warmest month of the last 12.
